Last updated on June 2, 2023

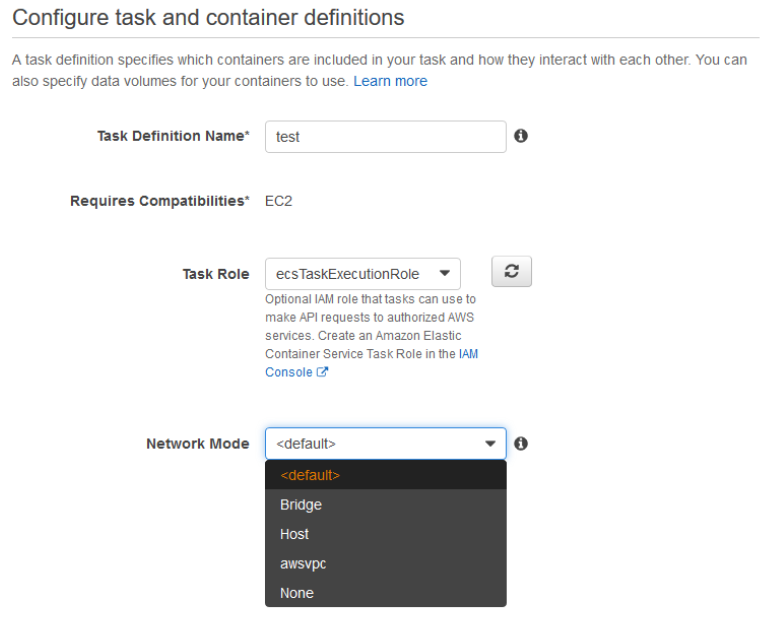

Amazon Elastic Container Service (ECS) lets you run Docker containers in the cloud. Amazon ECS has two launch types: EC2 and Fargate. With the EC2 launch type, EC2 instances serve as the hosts for your Docker containers. With the Fargate launch type, AWS manages the underlying hosts so you can focus on managing your containers instead. How you want your containers to run is defined in the ECS task definition, which includes the network mode.

In this post, we’ll talk about the different networking modes supported by Amazon ECS and determine which mode to use for your given requirements.

ECS Network Modes

Amazon Elastic Container Service supports four networking modes: Bridge, Host, awsvpc, and None. The mode you select in the task definition becomes the Docker networking mode used by the containers in your ECS tasks.
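As a rough illustration of where this setting lives, the sketch below builds a minimal task definition as a Python dictionary and prints it as JSON. The `networkMode`, `family`, and `containerDefinitions` keys are task definition parameters; the helper name `build_task_definition`, the `demo-task` family, and the `nginx:latest` image are hypothetical values chosen for the example.

```python
import json

# The four values accepted by the task definition "networkMode" parameter.
NETWORK_MODES = ("bridge", "host", "awsvpc", "none")


def build_task_definition(family, network_mode, image="nginx:latest"):
    """Return a minimal task definition dict (hypothetical helper for illustration)."""
    if network_mode not in NETWORK_MODES:
        raise ValueError(f"unsupported network mode: {network_mode!r}")
    return {
        "family": family,
        "networkMode": network_mode,
        "containerDefinitions": [
            # Container name, image, and memory are placeholder values.
            {"name": "web", "image": image, "memory": 128, "essential": True}
        ],
    }


if __name__ == "__main__":
    print(json.dumps(build_task_definition("demo-task", "bridge"), indent=2))
```

The resulting JSON has the same general shape as a task definition file you would register with ECS, although a real one usually carries more settings.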

Bridge network mode – Default

When you select the <default> network mode, you are selecting the Bridge network mode. This is the default mode for Linux containers. For Windows Docker containers, the <default> network mode is NAT. You must select <default> if you are going to register task definitions with Windows containers.

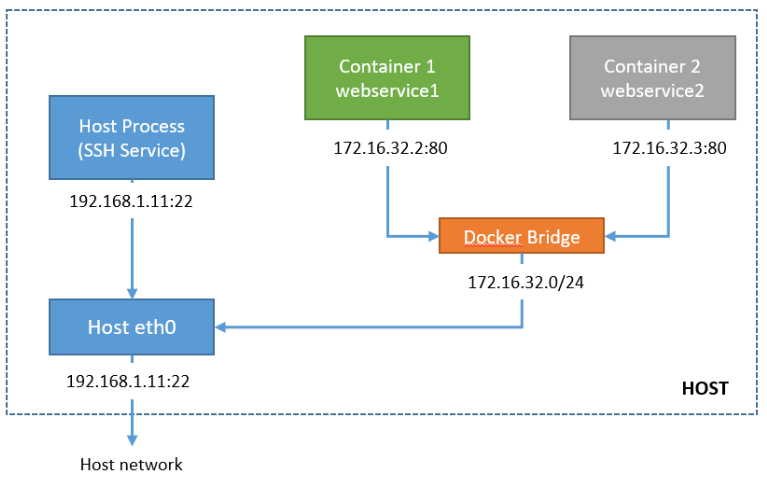

Bridge network mode utilizes Docker’s built-in virtual network, which runs inside each EC2 instance that hosts your containers. A bridge network is an internal virtual network on the host that allows all containers connected to the same bridge network to communicate. It provides isolation from other containers not connected to that bridge network. The Docker bridge driver handles this isolation on the host machine so that containers on different bridge networks cannot communicate with each other directly.

This mode can take advantage of dynamic host port mappings: every container can listen on the same container port (ex: port 80), and each container port is then mapped to a different port on the host. However, this mode does not provide the best networking performance because the bridge network is virtualized and Docker has to translate the traffic going in and out of the host.
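To make the dynamic mapping idea concrete, here is a small sketch. In bridge mode, a `hostPort` of `0` (or no `hostPort` at all) in a port mapping asks for a dynamically assigned host port. The function `simulate_host_ports` is a toy stand-in for that assignment, not how Docker or the ECS agent actually picks ports, and the starting port `49153` is an assumption used only to get distinct numbers.

```python
import itertools


def bridge_port_mapping(container_port, host_port=0, protocol="tcp"):
    """Build one portMappings entry; hostPort 0 requests a dynamic host port."""
    return {
        "containerPort": container_port,
        "hostPort": host_port,
        "protocol": protocol,
    }


def simulate_host_ports(mappings, start=49153):
    """Toy simulation: give every dynamic mapping the next unused host port.

    The real assignment is done on the container instance; `start` is an
    assumed value for illustration only.
    """
    candidates = itertools.count(start)
    used = {m["hostPort"] for m in mappings if m["hostPort"]}
    resolved = []
    for m in mappings:
        port = m["hostPort"]
        if port == 0:
            port = next(p for p in candidates if p not in used)
            used.add(port)
        resolved.append((m["containerPort"], port))
    return resolved


if __name__ == "__main__":
    # Three containers all listening on container port 80.
    mappings = [bridge_port_mapping(80) for _ in range(3)]
    for container_port, host_port in simulate_host_ports(mappings):
        print(f"container port {container_port} -> host port {host_port}")
```

Every container keeps port 80 internally while the host exposes each one on a different port, which is what lets several copies of the same task share one EC2 instance.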

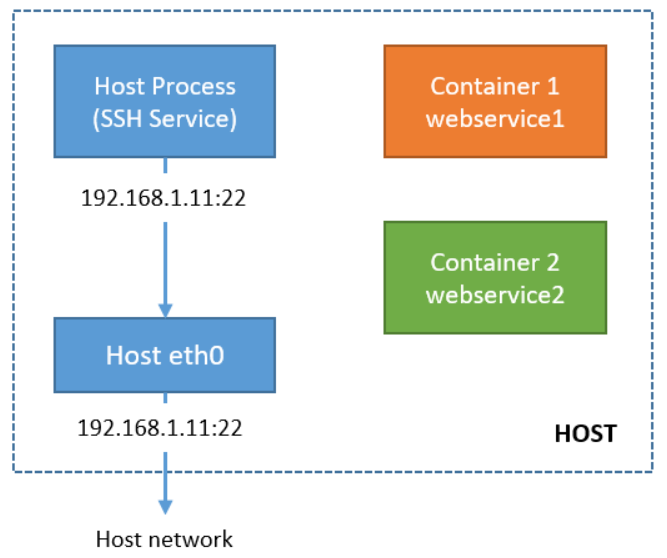

Host network mode

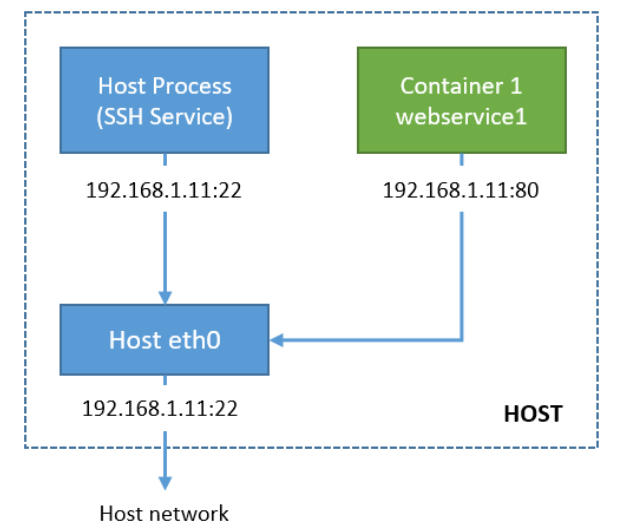

Host network mode bypasses Docker’s built-in virtual network and maps container ports directly to your EC2 instance’s network interface. Containers in this mode share the network namespace of the host EC2 instance, so they use the host’s IP address. This also means that you can’t have multiple containers on the same host using the same port: a port used by one container cannot be used by another, as this would cause a conflict.

This mode offers faster performance than the bridge network mode since it uses the EC2 network stack instead of the virtual Docker network.
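The port restriction in host mode can be checked ahead of time. The sketch below is a hypothetical helper, not part of any AWS tooling: given the containers you plan to place on one EC2 host, it reports every port that more than one container wants. The container names and ports are made up for the example.

```python
from collections import defaultdict


def find_host_mode_conflicts(containers):
    """Return {(port, protocol): [container names]} for ports claimed more than once.

    `containers` is a list of (name, port, protocol) tuples describing
    containers intended to run on the same host in host network mode.
    """
    owners = defaultdict(list)
    for name, port, protocol in containers:
        owners[(port, protocol)].append(name)
    return {key: names for key, names in owners.items() if len(names) > 1}


if __name__ == "__main__":
    planned = [
        ("web-a", 80, "tcp"),
        ("web-b", 80, "tcp"),   # clashes with web-a on the same host
        ("api", 8080, "tcp"),
    ]
    print(find_host_mode_conflicts(planned))
```

In this example, `web-a` and `web-b` could not run on the same instance in host mode, while `api` could run alongside either of them.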

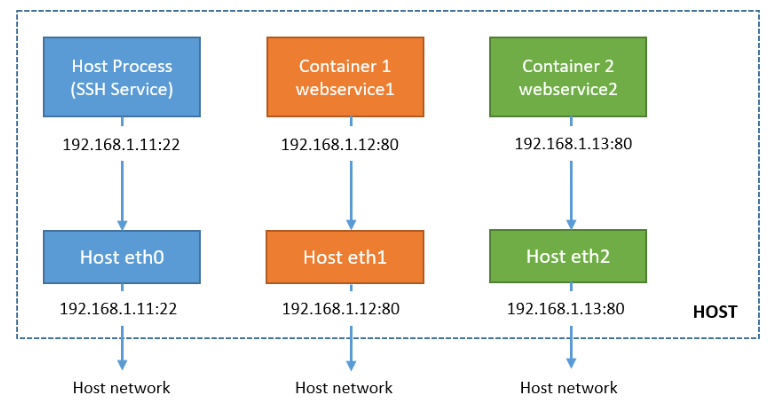

awsvpc mode

The awsvpc mode provides an elastic network interface (ENI) for each task. Containers in the same task share that ENI, so if you run one container per task, each container effectively has its own ENI and gets its own IP address from your VPC subnet IP address pool. Like host mode, this offers faster performance than the bridge network since it uses the EC2 network stack. Each task essentially acts like its own EC2 instance within the VPC, with its own ENI, even though the task actually resides on an EC2 host.

The awsvpc mode is recommended if your cluster will run several tasks and containers, since each task communicates through its own network interface. It is also the only network mode supported by Fargate. Since you don’t manage any EC2 hosts on Fargate, you can only use awsvpc so that each task gets its own network interface and IP address.
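The Fargate restriction can be expressed as a simple pre-registration check. This is a minimal sketch, assuming the task definition is available as a Python dictionary; `validate_network_mode` is a hypothetical helper. It also checks one related rule of awsvpc mode: a port mapping's `hostPort` must be omitted or equal to its `containerPort`.

```python
def validate_network_mode(task_def):
    """Return a list of problems found in a task definition dict (hypothetical helper)."""
    errors = []
    # Bridge is the default mode for Linux containers when none is given.
    mode = task_def.get("networkMode", "bridge")
    compat = task_def.get("requiresCompatibilities", [])

    if "FARGATE" in compat and mode != "awsvpc":
        errors.append(f"Fargate tasks require awsvpc, got {mode!r}")

    if mode == "awsvpc":
        for container in task_def.get("containerDefinitions", []):
            for mapping in container.get("portMappings", []):
                host_port = mapping.get("hostPort")
                if host_port is not None and host_port != mapping["containerPort"]:
                    errors.append(
                        f"{container['name']}: hostPort {host_port} must match "
                        f"containerPort {mapping['containerPort']} in awsvpc mode"
                    )
    return errors


if __name__ == "__main__":
    task_def = {
        "family": "demo-task",  # placeholder name
        "networkMode": "bridge",
        "requiresCompatibilities": ["FARGATE"],
        "containerDefinitions": [{"name": "web", "portMappings": [{"containerPort": 80}]}],
    }
    print(validate_network_mode(task_def))
```

An empty list means the checks passed; the example above reports one problem because it pairs Fargate with bridge mode.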

None network mode

This mode completely disables the networking stack inside the ECS task. The loopback network interface is the only one present inside each container, since the loopback interface is essential for Linux operations. You can’t specify port mappings in this mode because the containers do not have external connectivity.

You can use this mode if you don’t want your containers to access the host network, or if you want to use a custom network driver other than the built-in driver from Docker. You can only access the container from inside the EC2 host using Docker commands.
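Because port mappings are not allowed in this mode, a quick check can catch leftovers when you switch an existing task definition to `none`. The sketch below assumes the task definition is a Python dictionary; `containers_with_port_mappings` and the container names are hypothetical.

```python
def containers_with_port_mappings(task_def):
    """List containers that still declare portMappings in a 'none' mode task definition."""
    if task_def.get("networkMode") != "none":
        return []  # the restriction only applies to the none network mode
    return [
        container["name"]
        for container in task_def.get("containerDefinitions", [])
        if container.get("portMappings")
    ]


if __name__ == "__main__":
    task_def = {
        "networkMode": "none",
        "containerDefinitions": [
            {"name": "batch-job"},
            {"name": "legacy-web", "portMappings": [{"containerPort": 80}]},
        ],
    }
    print(containers_with_port_mappings(task_def))
```

Any name returned here points to a container whose port mappings should be removed before the task definition is registered.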

Sources:

https://docs.aws.amazon.com/AmazonECS/latest/developerguide/task_definition_parameters.html#network_mode

https://docs.aws.amazon.com/AmazonECS/latest/developerguide/task-networking.html

https://docs.aws.amazon.com/AmazonECS/latest/userguide/fargate-task-networking.html