Last updated on March 5, 2026

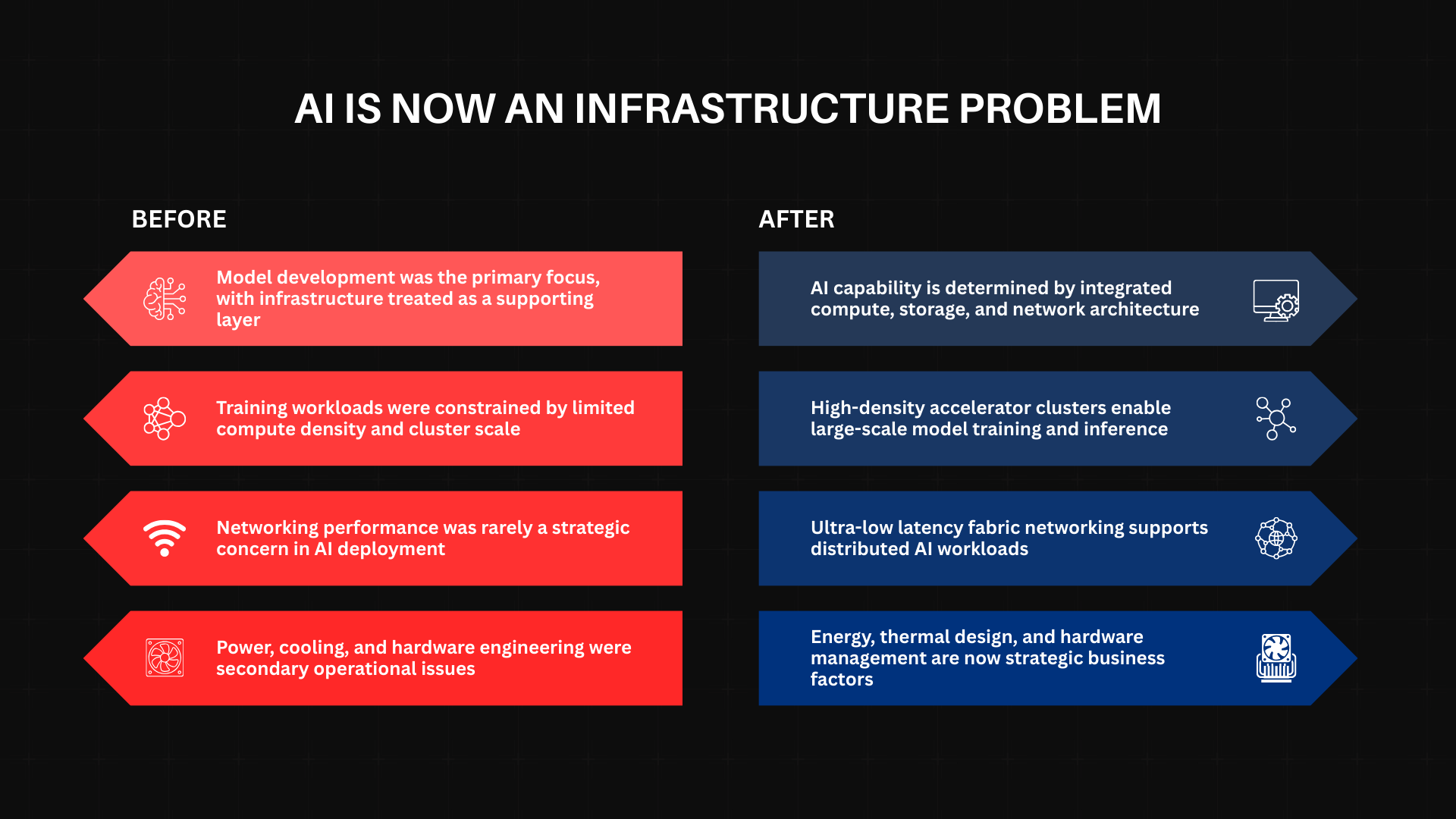

Artificial intelligence has shifted from a software innovation challenge to an infrastructure challenge. Organizations no longer struggle primarily with model design. Instead, they are increasingly constrained by compute density, energy consumption, networking scale, and operational complexity when deploying modern AI systems.

As large-scale generative AI systems become central to business strategy, infrastructure decisions now carry long-term economic consequences. This is the context in which AWS AI Factories emerged. First announced at AWS re:Invent, AWS AI Factories introduce a new economic model for enterprises that require large-scale AI infrastructure but cannot rely entirely on public cloud environments because of sovereignty, regulatory, or latency constraints. At its core, the case for them is about economics.

AI Infrastructure Is Capital Intensive by Design

Building independent AI infrastructure is no longer as simple as installing racks. Modern accelerators like the NVIDIA Blackwell (GB200/GB300 NVL72) and AWS Trainium3 platforms have pushed data center requirements past a “thermal wall.”

- The Cooling Crisis: A single Blackwell rack can consume and dissipate upwards of 120 kW. Traditional air cooling is insufficient; these systems require complex liquid-cooling loops and specialized heat exchange units.

- The Networking Tax: Distributed training depends on very low and consistent interconnect latency. Any inefficiency in the fabric, such as an improper EFA (Elastic Fabric Adapter) configuration, directly reduces GPU utilization. In a cluster of thousands of GPUs, a 10% drop in utilization isn’t just a technical glitch; it can be a multi-million dollar annual financial leak.

- The Hardware Roadmap: While Trainium3 is the current standard for price-performance in 2026, the recent roadmap update for Trainium4 (optimized for agentic reasoning) has introduced a new cycle of procurement complexity that most internal IT departments are not equipped to manage.
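The "multi-million dollar" figure in the networking bullet above can be checked with simple arithmetic. The sketch below is illustrative only: the function name, the cluster size of 4,000 GPUs, and the $3.00 hourly cost per GPU are assumptions chosen for the example, not AWS pricing or figures from the announcement.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_utilization_loss(num_gpus: int,
                            hourly_cost_per_gpu: float,
                            utilization_drop: float) -> float:
    """Yearly cost of accelerator-hours that are paid for but lost to idle time.

    utilization_drop is a fraction, e.g. 0.10 for a 10% drop.
    """
    if not 0.0 <= utilization_drop <= 1.0:
        raise ValueError("utilization_drop must be between 0 and 1")
    return num_gpus * hourly_cost_per_gpu * HOURS_PER_YEAR * utilization_drop


# Hypothetical inputs: 4,000 GPUs at an assumed all-in cost of $3.00 per GPU-hour.
loss = annual_utilization_loss(num_gpus=4000,
                               hourly_cost_per_gpu=3.00,
                               utilization_drop=0.10)
print(f"Estimated annual loss: ${loss:,.0f}")
```

Under these assumed inputs the estimate comes to roughly $10.5 million per year. The loss scales linearly with cluster size and hourly cost, so even a much smaller cluster reaches seven figures at a 10% drop.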

The 30-Month Advantage: Time as a Financial Variable

In practice, the largest cost in AI infrastructure is not capital expenditure (CapEx) alone but also slow deployment, since every month of delay is a month of missed business opportunity. A “Do-It-Yourself” (DIY) build-out of a hyperscale-grade AI cluster typically takes 18 to 30 months from planning and power procurement to production readiness. In the 2026 AI market, where model generations evolve roughly every six months, a two-year delay can be a terminal competitive disadvantage.

AWS AI Factories reshape this equation by delivering a “Private AWS Region” model. By providing a standardized, fully managed stack, AWS claims to reduce this timeline by up to 80%. Applied to a 30-month DIY build, that is as much as 24 months saved, and the economic multiplier becomes clear: organizations that reach production that much earlier can capture data advantages and operational efficiencies that late adopters may struggle to recover.
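To see how the "up to 80%" claim maps onto the 18 to 30 month DIY range, here is a minimal sketch. The function name is hypothetical, and applying the full 80% reduction uniformly across the range is an assumption; the source gives it only as an upper bound.

```python
def time_to_production(diy_months: float, reduction: float) -> tuple[float, float]:
    """Return (managed_months, months_saved) for a fractional timeline reduction."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    months_saved = diy_months * reduction
    return diy_months - months_saved, months_saved


# DIY range quoted in the article, with the claimed best-case 80% reduction.
for diy in (18, 30):
    managed, saved = time_to_production(diy, 0.80)
    print(f"{diy} months DIY -> about {managed:.1f} months managed, "
          f"{saved:.1f} months saved")
```

At the best-case reduction, the 18-month build shrinks to about 3.6 months and the 30-month build to about 6 months. The 24-month head start therefore applies only to the slowest DIY scenario; a faster DIY build gains about 14 months.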

Operational Complexity as a Recurring Liability

Infrastructure does not end at deployment. The “hidden” operational costs of high-density AI include:

- Firmware/Orchestration Synchronization: Managing the interplay between NVIDIA’s CUDA-X stack or AWS’s Neuron SDK and the underlying hardware.

- Staffing Opportunity Cost: Every engineer focused on power distribution or thermal management is an engineer not focused on building proprietary Agentic AI workflows.

- Risk Mitigation: AWS AI Factories utilize the AWS Nitro System to provide hardware-level isolation. In a DIY model, the burden of proving “sovereignty” to regulators falls entirely on the enterprise; in the Factory model, that security posture is “imported” from AWS’s proven compliance frameworks.

The Sovereign AI Middle Path

For government agencies, financial institutions, and the defense sector, the public cloud is often a non-starter due to strict data residency mandates. However, building a sovereign cloud from scratch can be prohibitively expensive and slow.

AWS AI Factories offer a middle path: hyperscale capability with local data control. In this model, the hardware resides in the customer’s data center, while management operations, security updates, and AI services such as Amazon Bedrock and the Amazon Nova 2 model family are delivered as a managed platform. As a result, organizations can run frontier-class models on-premises while maintaining compliance with local regulations.

When the Economics Align

Ultimately, AWS AI Factories make the strongest economic sense when AI becomes a core industrial capability rather than a short-term experimental project. For smaller organizations or short-lived experiments, the public cloud remains the gold standard for flexibility. But for enterprises that view AI as their primary competitive engine over the next decade, restructuring infrastructure complexity through an AI Factory may become one of the most strategic technology decisions they can make.

| Metric | Public Cloud (Elastic) | AWS AI Factory (Managed) | DIY (Self-Built) |
| --- | --- | --- | --- |
| Commitment | Low / On-demand | High (Multi-year) | High (CapEx) |
| Sovereignty | Low | High (Local) | High (Local) |
| Speed to Market | Immediate | Fast (Months) | Slow (Years) |
| Management | AWS Managed | AWS Managed | Self-Managed |
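
The comparison above can also be read as a simple filter over requirements. The sketch below encodes the table's rows as data and shortlists options; the class, the function, and the two yes/no requirement flags are hypothetical simplifications for illustration, not an AWS tool or an official decision framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentOption:
    name: str
    commitment: str
    sovereignty: str
    speed_to_market: str
    management: str


# Values copied from the comparison table.
OPTIONS = [
    DeploymentOption("Public Cloud (Elastic)", "Low / On-demand", "Low",
                     "Immediate", "AWS Managed"),
    DeploymentOption("AWS AI Factory (Managed)", "High (Multi-year)", "High (Local)",
                     "Fast (Months)", "AWS Managed"),
    DeploymentOption("DIY (Self-Built)", "High (CapEx)", "High (Local)",
                     "Slow (Years)", "Self-Managed"),
]


def shortlist(needs_local_sovereignty: bool, wants_managed_operations: bool) -> list[str]:
    """Return the names of options that satisfy both (simplified) requirements."""
    matches = []
    for option in OPTIONS:
        if needs_local_sovereignty and not option.sovereignty.startswith("High"):
            continue
        if wants_managed_operations and option.management != "AWS Managed":
            continue
        matches.append(option.name)
    return matches


print(shortlist(needs_local_sovereignty=True, wants_managed_operations=True))
```

With both requirements set, only the AI Factory row remains, which matches the "middle path" argument made earlier. Relaxing either requirement brings the public cloud or DIY option back into the shortlist.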

Conclusion

The economics of AI infrastructure are no longer about “the cost of a GPU.” They are about the cost of time, the risk of obsolescence, and the burden of complexity. AWS AI Factories do not eliminate the high costs of AI; they restructure them into predictable, managed certainties. For the enterprise that cannot afford to wait 30 months to join the AI race, that restructuring is the difference between leading the market and being managed by it.

References

- https://aws.amazon.com/about-aws/whats-new/2025/12/aws-ai-factories/

- https://www.aboutamazon.com/news/aws/aws-data-centers-ai-factories/

- https://aws.amazon.com/about-aws/global-infrastructure/ai-factories/

- https://www.hpcwire.com/aiwire/2025/12/02/aws-introduces-ai-factories-to-support-sovereign-large-scale-ai-deployments/