The AI-300 Microsoft Certified Machine Learning Operations (MLOps) Engineer Associate certification exam is designed for professionals who want to demonstrate their ability to implement and manage infrastructure for machine learning operations (MLOps) and generative AI operations (GenAIOps) on Azure, collectively known as AI operations (AIOps). Candidates are expected to have hands-on experience building and operating traditional machine learning models as well as deploying, evaluating, monitoring, and optimizing generative AI applications and agents.

A strong foundation in data science is expected for this role, including proficiency in Python and a solid understanding of DevOps fundamentals. Candidates should be comfortable working with tools and workflows such as GitHub Actions and command-line interfaces (CLIs). In addition, candidates should have knowledge and practical experience in machine learning operations (MLOps), particularly when working with the following technologies:

- Azure Machine Learning.

- Microsoft Foundry.

- GitHub Actions.

- Infrastructure-as-code (IaC) practices with Bicep and Azure CLI.

For more information about the AI-300 exam, see the official exam skills outline. This study guide provides review materials to help you prepare for and pass the exam.

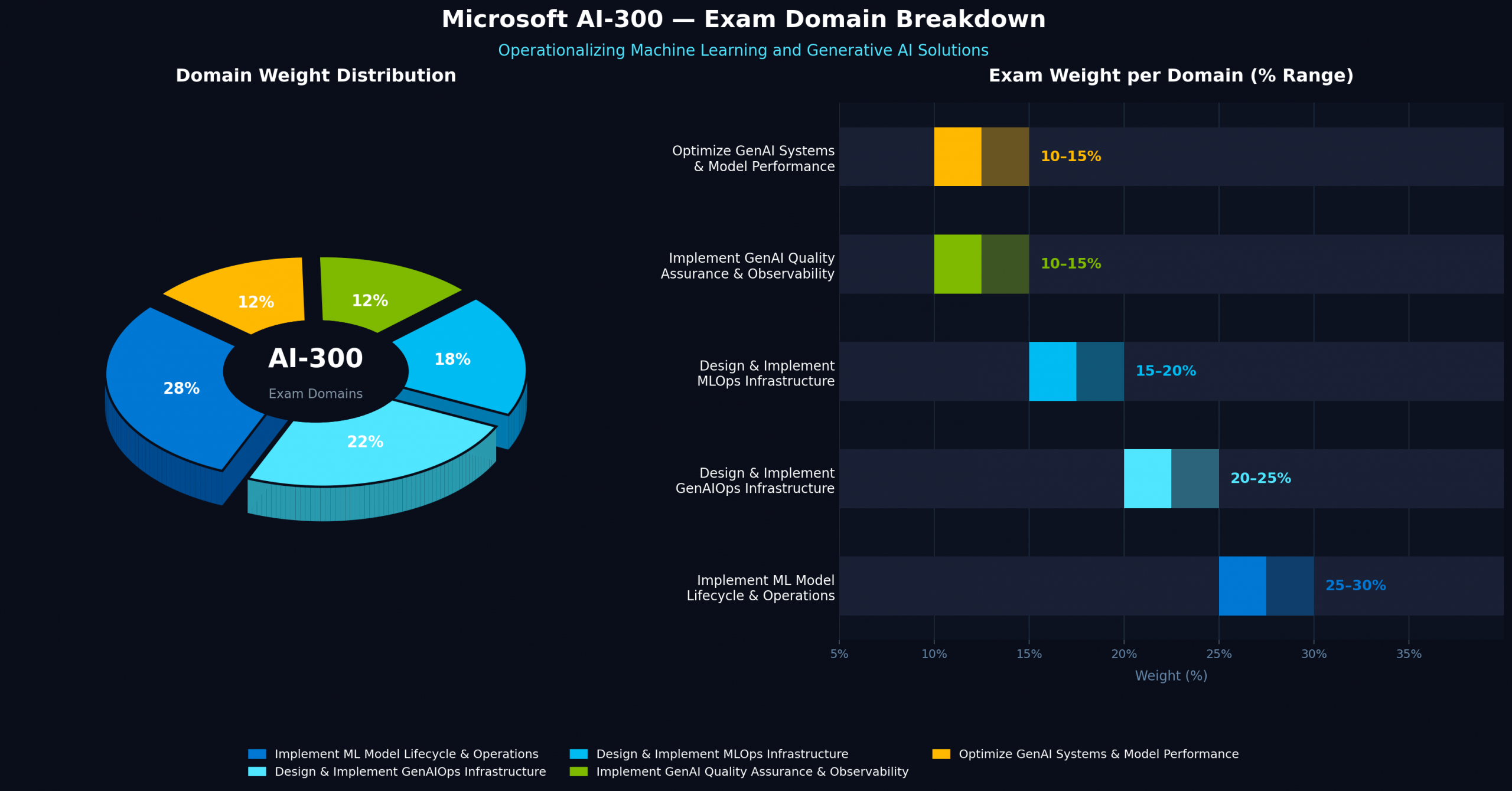

AI-300 Exam Domains

Below are the exam domains, or “Skills Measured,” for the AI-300 Microsoft Certified Machine Learning Operations (MLOps) Engineer Associate certification exam. These domains represent the core competencies that candidates are expected to demonstrate when designing, implementing, and managing machine learning and generative AI operational workflows within the Microsoft ecosystem.

- Design & implement MLOps infrastructure — 15–20%

- Implement ML model lifecycle & operations — 25–30%

- Design & implement GenAIOps infrastructure — 20–25%

- Implement generative AI quality assurance & observability — 10–15%

- Optimize generative AI systems & model performance — 10–15%

AI-300 Study Materials

Before attempting the AI-300 Microsoft Certified Machine Learning Operations (MLOps) Engineer Associate exam, review the following study materials. They cover the key concepts, tools, and services commonly evaluated in the certification, and will strengthen your knowledge of machine learning operations, automation, and deployment practices within the Microsoft ecosystem.

- Tutorials Dojo’s Azure Cheat Sheets — Quick reference guides that summarize key Azure services and concepts commonly covered in certification exams.

- Azure PlayCloud Hands-On Labs — Guided labs that allow you to practice deploying and managing Azure resources in a real environment.

- Azure Practice Exams — Our very own set of practice exams designed to help evaluate your readiness for the certification and identify topics that require further study.

- Microsoft Official Documentation and Learning Resources:

  - Artificial Intelligence overview

  - Generative AI documentation

  - Microsoft 365 Copilot documentation

  - Microsoft 365 documentation

  - Microsoft Q&A | Microsoft Docs

  - Microsoft 365 Copilot community hub

  - Microsoft 365 community hub (Microsoft Tech Community)

  - Exam Readiness Zone

  - Microsoft Learn Show

  - Azure Blog

- Azure Free Account — Enables you to explore Azure services and gain hands-on experience while preparing for the exam.

Azure Services to Focus on for the AI-300 Exam

Here is the list of Azure services to focus on for the AI-300 Microsoft Certified Machine Learning Operations (MLOps) Engineer Associate exam:

Azure Machine Learning

- Workspaces, datastores, compute targets, environments

- Data assets, components, and model registries

- MLflow experiment tracking

- Automated ML (AutoML) and hyperparameter tuning

- Real-time and batch endpoints (managed inference)

- Data drift detection and production monitoring

Microsoft Foundry

- Configure Foundry resources and project environments

- Deploy foundation models using serverless endpoints and managed compute

- Manage prompt versioning and model deployment strategies

- Monitor AI applications with metrics such as latency, throughput, and token usage

- Implement evaluation metrics such as groundedness, relevance, coherence, and fluency

Azure Networking & Security

- Private networking and network access restrictions for ML workspaces

- Managed identities and RBAC for both Machine Learning and Foundry

- Identity and access management (IAM) configuration

Azure Infrastructure as Code (IaC)

- Bicep templates for deploying ML workspaces and Foundry resources

- Azure CLI for resource provisioning and automation

GitHub & DevOps Tooling

- GitHub Actions workflows for automated resource provisioning and training pipelines

- Git-based source control for ML projects and prompt versioning

- GitHub integration with Azure Machine Learning for secure access

AI-300 Key Exam Topics by Domain

Design and Implement an MLOps Infrastructure

- Create and manage Azure Machine Learning workspaces, datastores, and compute resources

- Manage ML assets such as datasets, environments, and reusable components

- Configure identity, access control, and network restrictions for ML environments

- Deploy infrastructure using Bicep and Azure CLI

- Automate provisioning and integrate source control using GitHub Actions and Git

Implement Machine Learning Model Lifecycle and Operations

- Track experiments and manage training workflows using MLflow

- Use Automated ML, notebooks, and scripts for model development and tuning

- Build training pipelines and compare model performance across runs

- Register, version, and manage models throughout the lifecycle

- Deploy models to real-time or batch endpoints and monitor performance in production

Design and Implement a GenAIOps Infrastructure

- Configure Microsoft Foundry resources and project environments

- Implement identity management, RBAC, and private networking for AI systems

- Deploy foundation models using serverless endpoints or managed compute

- Manage model versioning and scale workloads using provisioned throughput

- Implement prompt development and version control using Git repositories

Implement Generative AI Quality Assurance and Observability

- Measure output quality using evaluation metrics such as groundedness, relevance, coherence, and fluency

- Implement safety checks and risk evaluation for harmful content

- Monitor operational metrics, including latency, throughput, and response times

- Track token usage, enable logging, and configure diagnostics for troubleshooting
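The operational metrics in this domain can be made concrete with a small calculation. The following is a minimal sketch, assuming request logs have already been exported from your monitoring solution; the `RequestLog` schema, the `summarize` helper, and the sample values are hypothetical and are not part of any Azure SDK.

```python
import math
from dataclasses import dataclass


@dataclass
class RequestLog:
    """One logged model call (hypothetical schema, for illustration only)."""
    latency_ms: float
    prompt_tokens: int
    completion_tokens: int


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(logs, window_seconds):
    """Aggregate latency, throughput, and token usage over one time window."""
    latencies = [log.latency_ms for log in logs]
    total_tokens = sum(log.prompt_tokens + log.completion_tokens for log in logs)
    return {
        "requests": len(logs),
        "p50_latency_ms": percentile(latencies, 50),
        "p95_latency_ms": percentile(latencies, 95),
        "throughput_rps": len(logs) / window_seconds,
        "total_tokens": total_tokens,
        "avg_tokens_per_request": total_tokens / len(logs),
    }


# Made-up sample data covering a 2-second window.
sample_logs = [
    RequestLog(120.0, 50, 100),
    RequestLog(300.0, 80, 200),
    RequestLog(150.0, 60, 120),
    RequestLog(900.0, 100, 400),
]
summary = summarize(sample_logs, window_seconds=2)
print(summary)
```

Percentiles are used instead of the mean because a single slow request (900 ms here) dominates the tail latency that users actually notice, while barely moving the median.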

Optimize Generative AI Systems and Model Performance

- Improve RAG systems by tuning retrieval strategies and similarity thresholds

- Optimize chunking strategies and retrieval configurations for better responses

- Fine-tune embedding models for domain-specific applications

- Use hybrid retrieval methods combining semantic and keyword search

- Evaluate improvements using relevance metrics and testing frameworks
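To see how chunking, similarity thresholds, and hybrid retrieval fit together, consider the following minimal sketch. It assumes toy two-dimensional embeddings and a simple term-overlap keyword score; the function names, the `alpha` weight, and the `threshold` default are hypothetical choices for illustration, not the behavior of Azure AI Search or Microsoft Foundry.

```python
import math


def chunk_words(text, size, overlap):
    """Split text into chunks of `size` words that share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_score(query, text):
    """Fraction of query terms found in the text (toy lexical score)."""
    terms = set(query.lower().split())
    words = set(text.lower().split())
    return len(terms & words) / len(terms) if terms else 0.0


def hybrid_search(query, query_vec, docs, alpha=0.5, threshold=0.3):
    """Rank docs by alpha * semantic + (1 - alpha) * keyword, dropping low scores."""
    results = []
    for text, vec in docs:
        score = alpha * cosine(query_vec, vec) + (1 - alpha) * keyword_score(query, text)
        if score >= threshold:
            results.append((round(score, 3), text))
    return sorted(results, reverse=True)


chunks = chunk_words("a b c d e f g", size=4, overlap=2)

# Each document is (text, toy embedding); real embeddings come from a model.
docs = [
    ("deploy model to batch endpoint", [1.0, 0.0]),
    ("tune retrieval similarity threshold", [0.0, 1.0]),
    ("monitor token usage", [0.6, 0.8]),
]
hits = hybrid_search("retrieval threshold", [0.0, 1.0], docs)
print(chunks)
print(hits)
```

Raising `threshold` trades recall for precision, and shifting `alpha` toward 1.0 favors semantic matches over exact keyword matches. In practice, both values are tuned against relevance metrics on a test set rather than chosen by hand.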

AI-300 Important Skills to Focus on

- MLOps Infrastructure Setup — configure Azure Machine Learning workspaces, compute resources, datastores, and ML assets while implementing secure access, networking controls, and Infrastructure as Code using Bicep and Azure CLI.

- Machine Learning Model Lifecycle Management — train, track, register, deploy, and monitor machine learning models using tools such as MLflow, Automated ML, and managed inference endpoints.

- GenAIOps Implementation — deploy and manage generative AI solutions using Microsoft Foundry, including foundation model deployment, prompt management, and scalable inference configurations.

- AI Quality Evaluation and Observability — implement evaluation workflows for generative AI systems using metrics such as groundedness, relevance, and coherence while monitoring operational metrics like latency, throughput, and token usage.

- Generative AI Optimization Techniques — improve system performance through RAG optimization, embedding model selection, fine-tuning strategies, and performance testing.

- MLflow — heavily used for experiment tracking, model registration, versioning, and managing the machine learning lifecycle.

- RAG optimization — focuses on improving retrieval-augmented generation performance by tuning retrieval strategies, similarity thresholds, and chunking methods.

- Responsible AI evaluation — emphasizes assessing models using responsible AI principles, including fairness, safety checks, and evaluation metrics for trustworthy AI systems.

- Managed identities + RBAC — key security mechanisms for controlling authentication and access across both MLOps and GenAIOps environments.

Validate Your AI-300 Exam Readiness

If you feel confident after going through the suggested materials above, it’s time to put your knowledge of Azure concepts and services to the test. For practice exams, consider using Tutorials Dojo’s AI-300 Microsoft Certified Machine Learning Operations (MLOps) Engineer Associate Practice Exams.

These practice tests cover the relevant topics that you can expect from the real exam. They also contain different types of questions, such as single-choice, multiple-response, hotspot, yes/no, and drag-and-drop. Every question has a detailed explanation and reference links that help you understand why the correct answer is the most suitable solution. After you’ve taken the exams, your results will highlight the areas you need to improve. Together with our cheat sheets, we’re confident that you’ll be able to pass the exam and gain a deeper understanding of how Azure works.

AI-300 Sample Practice Test Questions:

Question 1

You need to analyze customer satisfaction from e-commerce platforms by processing text data from surveys and reviews to determine sentiment and extract insights. The solution must leverage natural language processing (NLP) to classify the feedback effectively.

Which Azure AI service should you use?

1. Azure AI Language

2. Azure AI Content Safety

3. Azure AI Document Intelligence

4. Azure AI Speech

Question 2

You are using the Azure AI Custom Vision service to train an image classifier for a food retailer. The classifier will categorize images of products into categories such as fruits, vegetables, and beverages. After training the model, you need to evaluate its performance to ensure that the predictions are accurate.

Which two built-in metrics does Azure AI Custom Vision provide for the performance evaluation of the classifier? (Select TWO)

- F1-Score

- Precision

- Recall

- Confusion matrix

- Precision-recall curve

For more Azure practice exam questions with detailed explanations, check out the Tutorials Dojo Portal.

Final Remarks

Success in the AI-300 exam requires both conceptual knowledge and practical experience implementing MLOps and GenAIOps solutions on Azure. Focus your preparation on the official Microsoft Learn materials and reinforce your understanding through hands-on practice with Azure Machine Learning, Microsoft Foundry, GitHub Actions, Bicep, and Azure CLI. Practice exams are also helpful for assessing your readiness and identifying areas that need further improvement. By following this focused study approach, you will be well-prepared to earn the Microsoft Certified Machine Learning Operations (MLOps) Engineer Associate certification. Good luck with your preparation!