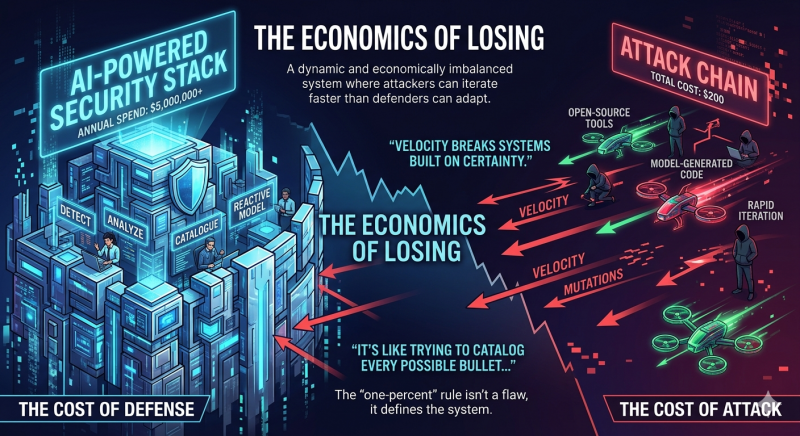

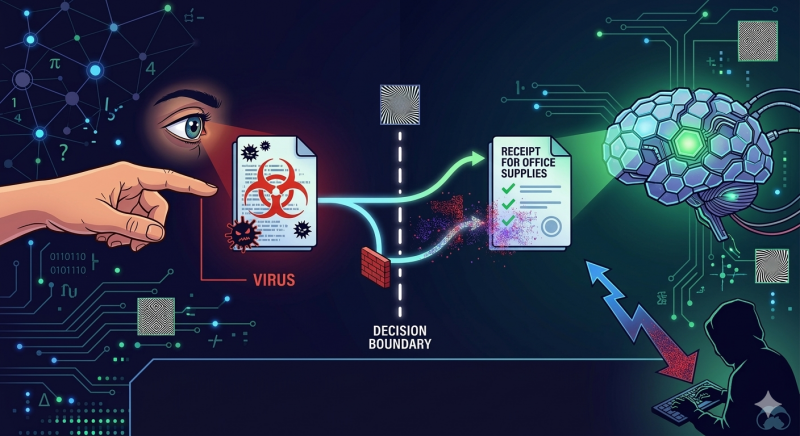

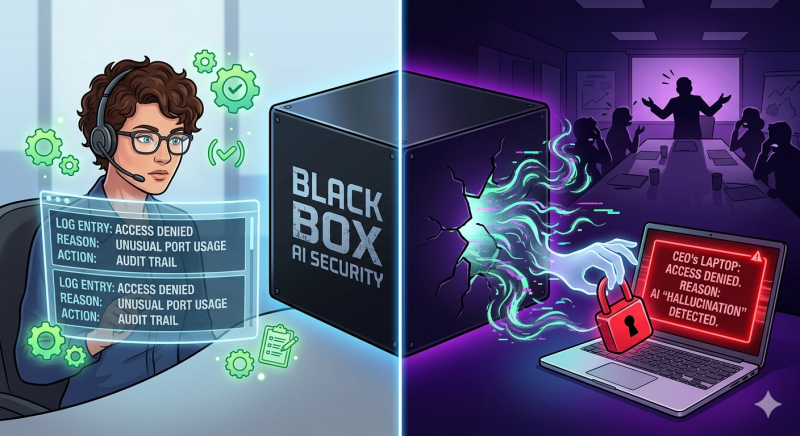

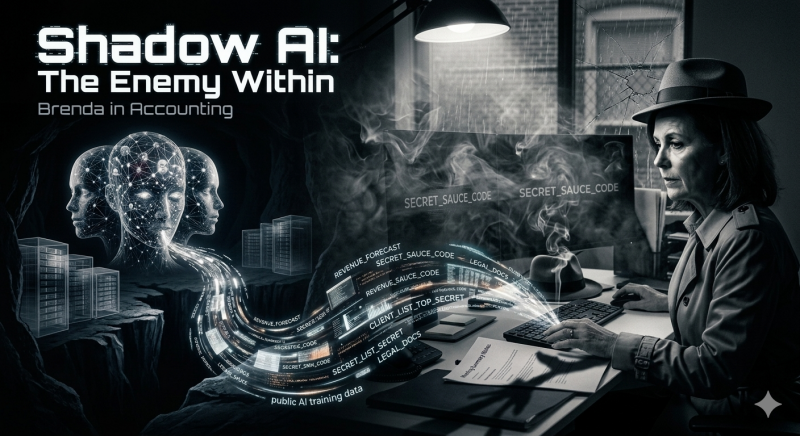

The Mirage of the “Perfect” AI Shield: Why Your Security is Still Screwed

In a world drowning in AI hype, we’ve been sold a sedative: the idea that a “Perfect AI Security System” is just around the corner. We imagine a digital god that predicts every breach, slams every door, and lets us all sleep through the night. It’s a lie. A “perfect” AI security system will never exist. That’s not because the tech isn’t getting smarter; it’s because the math of the universe is stacked against the defender. Here is why your “unbreakable” AI is actually a glass house.

The Asymmetry of the “One-Percent”

Cybersecurity is the only war where the defender has to be right 100% of the time, but the attacker only has to be right once. AI didn’t solve this asymmetry; it industrialized it. What used to take skill, patience, and time now takes a prompt.

A decade ago, writing functional malware required deep knowledge of systems, networking, and exploitation. Today, however, a bored teenager with a cheap GPU and access to an LLM can generate thousands of polymorphic variants in hours. Each one is just novel enough to slip past signature-based detection.

Defense, meanwhile, hasn’t changed at its core. It still depends on coverage, visibility, and prediction. You have to catch everything: every payload, every anomaly, every edge case. Miss one, and that’s your breach.

So the math gets worse. An attacker can automate reconnaissance, phishing campaigns, vulnerability discovery, and exploit generation simultaneously. They don’t need perfection, just probability. A 0.1% success rate is enough when you’re firing at scale.

Defenders, on the other hand, absorb the full cost of that scale. More alerts. More false positives. More noise. More analysts burning out trying to separate signal from chaos. Every new defensive layer adds complexity, and complexity itself becomes an attack surface. In effect, we removed the barrier to entry.

The Economics of Losing

In reality, the economics are upside down as well. A company can spend $5M a year on an “AI-powered security stack” and still lose to a $200 attack chain stitched together from open-source tools and model-generated code. That’s not because the tools are bad. It’s because the model of defense is fundamentally reactive. We’re trying to detect what has already been created, already been launched, already been mutated. It’s like trying to catalog every possible bullet in a world where anyone can manufacture new ones on demand.

Ultimately, this is the real shift AI introduced: not intelligence, but velocity. And velocity breaks systems built on certainty.

The uncomfortable truth is that there is no such thing as a perfect AI security system. That’s not because the models aren’t good enough. It’s because the problem itself is adversarial, dynamic, and economically imbalanced. As long as attackers can iterate faster than defenders can adapt, the imbalance persists. In other words, the “one-percent” rule isn’t a flaw; it defines the system.

Adversarial Math: Tricking the “Brain”

AI doesn’t “think”; it does high-speed pattern matching. And patterns can be tricked. Hackers use adversarial machine learning to find the “blind spots” in an AI’s vision. They probe the system, map its decision boundaries, and learn exactly how inputs are classified. Then they add imperceptible noise: microscopic changes that push malicious inputs across that boundary.

Think of it like an optical illusion. By adding a few invisible pixels of “noise” to a malicious file, they can make it look like a harmless PDF invoice to your AI. The payload doesn’t need to change its function; it just needs to change how it looks to the math. To a human, it’s clearly a virus. The AI, however, reads it as a receipt for office supplies.

If you trick the math, the system doesn’t just fail. It stays silent, because it thinks everything is fine. In effect, your “perfect” guard just invited the burglar in for tea because he was wearing a delivery guy’s hat.

The “Black Box” Hallucination

When a human firewall makes a mistake, you can ask them why. You can audit the logs and fix the logic. When an AI security system fails, there is often no one who can tell you why.

We are moving toward “unexplainable security.” AI models are “black boxes”: even the people who built them can’t always explain why the machine flagged one file but ignored another. That opacity turns false positives into mysteries. Your AI might suddenly decide to block the CEO’s laptop during a billion-dollar board meeting because it “hallucinated” a threat, and nobody can say why. That’s not a security feature; it’s a self-inflicted attack on your own productivity.

Shadow AI: The Enemy Within

In practice, the biggest hole in your “perfect” system isn’t a shadowy hacker in a hoodie; it’s Brenda from Accounting. While you’re building a digital fortress at the front door, your employees are throwing the company’s “secret sauce” over the back fence. They paste sensitive financial data, legal docs, or “top secret” code into public AI tools to “summarize a meeting” or “clean up the grammar.” That data is now out in the wild. Depending on the tool’s terms, it may be used to train the next generation of public AI. You can’t build a shield for a company that is actively leaking its own soul.

Stop Building Cathedrals, Start Living in the Jungle

The industry needs to stop treating security like a Cathedral: a static, “perfect” monument that is finished once the last brick is laid. It’s time to treat it like a Jungle. In a jungle, you don’t expect to never get bitten; you carry an antidote.

Perfection is a fairy tale told by sales decks to get your budget. Real security is about limiting the “Blast Radius”: how much damage a single failure can do. It’s about accepting that your “Perfect AI” is going to get punched in the mouth. It’s about having a plan to keep the rest of the body alive when it starts bleeding.

In short, resilience beats perfection. If your strategy relies on an AI being “perfect,” you’ve already lost. The goal isn’t to build a wall that never breaks; it’s to build a system that can survive the break.
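To put rough numbers on the “one-percent” asymmetry, here is a minimal Python sketch. It assumes every attack attempt is independent and has the same fixed success rate, which real campaigns only approximate. The function names `breach_probability` and `attempts_for_odds` are hypothetical, made up for illustration.

```python
import math


def breach_probability(p_success: float, attempts: int) -> float:
    """Chance that at least one of `attempts` independent tries succeeds.

    Assumes every attempt is independent with the same success rate,
    which simplifies how real attack campaigns behave.
    """
    if not 0.0 <= p_success <= 1.0:
        raise ValueError("p_success must be between 0 and 1")
    if attempts < 0:
        raise ValueError("attempts must be non-negative")
    # The defender only holds if every single attempt fails: (1 - p) ** n.
    defender_holds = (1.0 - p_success) ** attempts
    return 1.0 - defender_holds


def attempts_for_odds(p_success: float, target: float) -> int:
    """Smallest number of attempts that lifts the breach chance to `target`."""
    if not (0.0 < p_success < 1.0 and 0.0 < target < 1.0):
        raise ValueError("p_success and target must be strictly between 0 and 1")
    # Solve 1 - (1 - p) ** n >= target for the smallest whole n.
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - p_success))


if __name__ == "__main__":
    p = 0.001  # the 0.1% per-attempt success rate from the text
    for n in (100, 1_000, 5_000):
        print(f"{n:>5} attempts -> {breach_probability(p, n):.1%} chance of a breach")
    print("attempts for a coin-flip breach:", attempts_for_odds(p, 0.5))
```

With the 0.1% rate from the text, 1,000 attempts already give roughly a 63% chance that at least one lands. About 693 attempts are enough for a coin flip. The defender’s odds, `(1 - p) ** n`, shrink geometrically with every extra attempt, and automation makes those extra attempts nearly free.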
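The “optical illusion” from the adversarial math section can be reproduced on a toy model. The sketch below applies the fast gradient sign method (FGSM) from Goodfellow et al.’s “Explaining and Harnessing Adversarial Examples” to a hypothetical logistic-regression detector.

Everything about the detector is an assumption for illustration:

- 1,000 continuous features scaled to [0, 1]
- alternating ±0.1 weights
- a perturbation budget of 0.03 per feature

Real file features are often discrete, and an attacker also has to keep the payload working. So this shows the principle, not a working evasion tool.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic function that maps a raw score to a probability."""
    return 1.0 / (1.0 + math.exp(-z))


def logit(weights: list[float], bias: float, x: list[float]) -> float:
    """Raw score z = w . x + b of a linear detector."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def score(weights: list[float], bias: float, x: list[float]) -> float:
    """Hypothetical detector: probability that feature vector x is malicious."""
    return sigmoid(logit(weights, bias, x))


def fgsm_evade(weights: list[float], bias: float, x: list[float],
               epsilon: float) -> list[float]:
    """One FGSM step against the detector for a sample labelled malicious.

    With label y = 1 the log-loss is -log(sigmoid(z)), whose gradient with
    respect to x_i is -(1 - sigmoid(z)) * w_i. FGSM moves each feature by
    epsilon in the sign of that gradient, which lowers the malicious score.
    """
    p = score(weights, bias, x)
    adversarial = []
    for w, xi in zip(weights, x):
        grad = -(1.0 - p) * w  # d(loss)/d(x_i) for the true label y = 1
        step = 0.0 if grad == 0 else math.copysign(epsilon, grad)
        # Clip so every feature stays in its assumed [0, 1] range.
        adversarial.append(min(1.0, max(0.0, xi + step)))
    return adversarial


if __name__ == "__main__":
    # Hypothetical detector: 1,000 features with alternating +/-0.1 weights.
    weights = [0.1, -0.1] * 500
    bias = 0.0
    # A faint malicious signal spread thinly across every feature.
    malicious = [0.52, 0.48] * 500
    evasive = fgsm_evade(weights, bias, malicious, epsilon=0.03)
    biggest_change = max(abs(a - b) for a, b in zip(evasive, malicious))
    print(f"original score: {score(weights, bias, malicious):.2f}")
    print(f"evasive score:  {score(weights, bias, evasive):.2f}")
    print(f"largest change to any feature: {biggest_change:.3f}")
```

No feature moves by more than 0.03, yet the detector’s score falls from about 0.88 (flagged) to about 0.27 (passed). Goodfellow et al. explain this for linear models: a tiny nudge in each of many features adds up. Here the raw score shifts by epsilon times the sum of the absolute weights (0.03 × 100 = 3).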

Sources (for the skeptics):

Foundations & Security Models

National Institute of Standards and Technology. (2018). Framework for Improving Critical Infrastructure Cybersecurity (Version 1.1).

https://www.nist.gov/cyberframework

Cybersecurity and Infrastructure Security Agency. (2023). Zero Trust Maturity Model.

https://www.cisa.gov/zero-trust-maturity-model

Breach Data & Economics

Verizon. (2024). 2024 Data Breach Investigations Report.

https://www.verizon.com/business/resources/reports/dbir/

IBM. (2024). Cost of a Data Breach Report 2024.

https://www.ibm.com/reports/data-breach

World Economic Forum. (2024). Global Cybersecurity Outlook.

https://www.weforum.org/reports/global-cybersecurity-outlook/

RAND Corporation. (2015). The Defender’s Dilemma: Charting a Course Toward Cybersecurity.

https://www.rand.org/pubs/research_reports/RR1024.html

Adversarial AI & Model Exploitation

MITRE. (2023). ATLAS: Adversarial Threat Landscape for Artificial-Intelligence Systems.

https://atlas.mitre.org/

Google Research. (2015). Explaining and Harnessing Adversarial Examples.

https://arxiv.org/abs/1412.6572

OpenAI. (2023). Safety & Security Research.

https://openai.com/research

AI Limitations & Black Box Problem

Stanford University. (2023). Holistic Evaluation of Language Models (HELM).

https://crfm.stanford.edu/helm/latest/

DARPA. (2020). Explainable Artificial Intelligence (XAI) Program.

https://www.darpa.mil/program/explainable-artificial-intelligence

Olah, C., Mordvintsev, A., & Schubert, L. (2017). Feature Visualization. Distill.

https://distill.pub/2017/feature-visualization/

Human Factor & Shadow AI

Gartner. (2024). Managing Shadow IT and AI Risk.

https://www.gartner.com/en/information-technology

OWASP. (2023). Top 10 Risks for Large Language Model Applications.

https://owasp.org/www-project-top-10-for-large-language-model-applications/

Perfect AI Security Doesn’t Exist
