Last updated on December 2, 2025

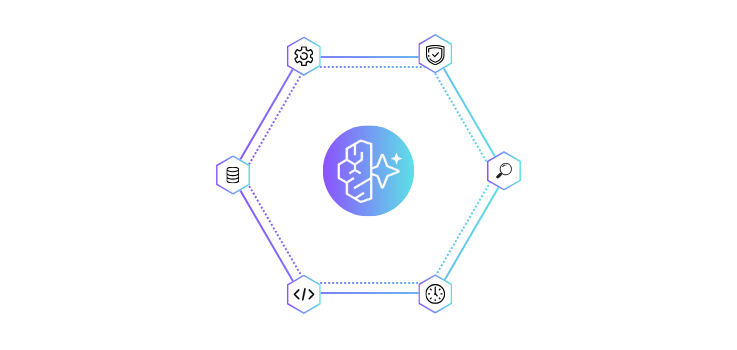

Imagine spending months building an AI agent that integrates seamlessly into your development environment. It uses tools, answers questions, and completes tasks precisely as planned. But moving it to production becomes a nightmare: suddenly, you are dealing with scaling problems, security concerns, authentication systems, and API interfaces. What should have been a simple deployment becomes weeks of infrastructure work unrelated to your agent’s intelligence. This production gap is the bane of countless developers, and it is precisely the problem Amazon Bedrock AgentCore was built to solve.

What is Amazon Bedrock AgentCore?

Amazon Bedrock AgentCore is an all-in-one platform for deploying and operating AI agents across any framework and model. As the push toward AI agents intensifies, organizations must balance autonomy and scale with the strict security, reliability, and governance demands of enterprise environments. AgentCore helps developers bridge the critical gap between proof-of-concept and production: you bring your agent logic (built in LangGraph, CrewAI, etc.), and AgentCore handles the security, scaling, and integration.

The Prototype-to-Production Problem

Building an AI agent that works locally is one challenge; making it production-ready is an entirely different feat. Developers face critical obstacles along the way, and AgentCore is built to eliminate this gap. Whether your agent uses LangGraph, CrewAI, or custom Python code, AgentCore provides the operational backbone to run it.

Amazon Bedrock vs. Amazon Bedrock AgentCore

Let’s clear up a common confusion: Amazon Bedrock and Amazon Bedrock AgentCore are related but serve fundamentally different purposes in the AI development ecosystem.

Understanding Amazon Bedrock

Amazon Bedrock is AWS’s fully managed generative AI service. It provides access to high-performing foundation models (FMs) from Amazon and third-party AI providers via a unified API. It focuses on the intelligence.

Understanding Amazon Bedrock AgentCore

Amazon Bedrock AgentCore is the production infrastructure for AI agents. It focuses on lifecycle management: deploying, operating, and scaling agents in real-world environments.

Why and How They Work Together

Using Bedrock and AgentCore together is common because they serve complementary layers. You use Bedrock for your agent’s core intelligence (the model) and AgentCore to deploy and operate that agent, so that it runs securely and scales efficiently with any framework.

| Feature | Amazon Bedrock | Amazon Bedrock AgentCore |
| --- | --- | --- |
| Primary Role | The Brain (Intelligence) | The Body (Operations & Infrastructure) |
| What it Provides | Foundation Models (Claude, Llama, Titan) via unified API | Secure Runtimes, Memory, Identity, and Tool Gateways |
| Best for | Accessing models and simple, managed agent workflows | Deploying, securing, and scaling complex custom agent code |
| Flexibility | Model-focused (Model-as-a-Service) | Framework-agnostic (Works with LangGraph, CrewAI, etc.) |

The Bottom Line: Bedrock gives you the intelligence; AgentCore gives you the operations. One provides the brain, the other provides the nervous system that lets that brain interact with the real world.

Core Capabilities and Key Services

AgentCore organizes its seven interconnected services into three main production stages: Deploy, Enhance, and Monitor.

Deploy: From Development to Production

The foundation of the platform is a secure environment that takes your code from a local repository to global scale.

Enhance: Powering Your Agents

Once deployed, agents need tools to remember context and interact with the real world.

Monitor: Maintaining Production Quality

You cannot improve what you cannot measure. This layer provides the visibility needed to maintain reliability.

How These Components Work Together

Instead of stitching together seven different vendors, AgentCore provides a unified flow.

AgentCore Common Use Cases & Real-World Applications

Amazon Bedrock AgentCore is designed to solve complex production challenges. Here is how organizations are using these capabilities:

Integrate with Existing Systems

Enhance Functionality with Built-in Tools

Implement Conversational Memory

Monitor and Optimize Performance

Benefits Across the Board

The impact of AgentCore extends across different roles and organizational levels, each experiencing unique advantages.

👩‍💻 For Developers

🏢 For Organizations

🌐 For Enterprises

The Bigger Picture: Standardized AI Production

AgentCore removes the “infrastructure tax” from AI development. Previously, running sophisticated agents at scale largely required the massive DevOps teams of a tech giant. Now, a two-person startup can deploy the same production-grade agents as a Fortune 500 company. This shifts the workflow, allowing teams to focus on developing intelligent behaviors rather than maintaining servers. AgentCore solves the “how it runs” issue, freeing developers to focus on “what it does”.

Getting Started with Amazon Bedrock AgentCore

Reading about infrastructure is one thing, but seeing an agent go from “localhost” to a secure, production-grade URL in minutes is where the real magic happens. To help developers bypass the initial learning curve, AWS provides the AgentCore Starter Toolkit.

What is the AgentCore Starter Toolkit?

The Starter Toolkit is a Python-based CLI utility that handles the heavy lifting of initial configuration. It lets you scaffold a new project, test locally with an emulator, and deploy to the Runtime with a single command.

Prerequisites

📝 Note: The following code blocks are simplified snippets designed to demonstrate the developer experience and workflow. They are not intended to be a complete step-by-step tutorial. For full implementation details, prerequisites, and runnable examples, please refer to the Official Quick-Start Guides linked at the end of this section.

1. Install Dependencies

You need the SDK, the Toolkit, and a framework to build your agent logic. The official guide uses Strands (an open-source agent framework).

2. Create the Agent Code

The key piece is BedrockAgentCoreApp. This wrapper turns your local agent code into a production-ready API compatible with the serverless runtime. Create a file named agent.py.

    from bedrock_agentcore.runtime import BedrockAgentCoreApp
    from strands import Agent

    # Initialize the runtime application
    app = BedrockAgentCoreApp()

    # Initialize your agent using the Strands framework
    # (By default, this uses Claude Sonnet if configured in your environment)
    agent = Agent()

    # The @app.entrypoint decorator exposes this function to the Runtime
    @app.entrypoint
    def invoke(payload):
        """
        Main entry point. Receives JSON payload from the Runtime.
        """
        # Extract the user's prompt from the request
        user_message = payload.get("prompt", "Hello!")

        # Run the agent logic
        result = agent(user_message)

        # Return the result in a structured format
        return {"result": result.message}

    # Allows you to test this script locally before deploying
    if __name__ == "__main__":
        app.run()

3. Define Requirements

The runtime needs to know what libraries to install in the cloud. Create a requirements.txt file in the same folder:

    bedrock-agentcore
    strands-agents

4. Configure and Deploy

Now, use the CLI to package everything. The configure command reads your Python file and automatically generates the Dockerfile and IAM permissions for you.

    # 1. Configure the project (generates the Dockerfile and IAM permissions)
    agentcore configure --entrypoint agent.py

    # 2. Deploy to AWS Cloud (builds container & provisions runtime)
    agentcore launch

Ready to dive deeper?

To build this yourself, we highly recommend following the official documentation. These resources provide step-by-step instructions for getting your first agent running. One guide is best for a rapid, code-first introduction to the CLI and project structure; the other is best for a comprehensive deep dive into prerequisites, permissions, and advanced configuration.

By following these guides, you will see firsthand how AgentCore abstracts away the complexity of the “Deploy, Enhance, Monitor” cycle, letting you focus on your agent’s logic.

The Future of Production AI

Amazon Bedrock AgentCore represents a decisive shift in how we build AI: moving from experimental chatbots to autonomous, production-grade agents. By standardizing the “Deploy, Enhance, Monitor” lifecycle, organizations can bypass months of custom DevOps work and target compliance and reliability from day one. Whether you are a startup scaling your first agent or an enterprise modernizing legacy workflows, AgentCore provides the operational backbone necessary to succeed. It creates a clear division of labor: AWS handles the body (scale, security, and connections) so your team can focus entirely on the intelligence. The infrastructure is no longer an obstacle; it is a commodity. Now, the only limit is your logic.
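As a supplement to the agent.py walkthrough in Getting Started, the payload contract of the entry point (a JSON object with an optional "prompt" key in, a {"result": ...} object out) can be exercised without AWS credentials or a model. The sketch below is only an illustration under stated assumptions: `StubAgent` and `make_handler` are hypothetical names that do not come from the AgentCore SDK or Strands, and the stub returns a plain string rather than the `result.message` object a real Strands agent would produce.

```python
import json
from typing import Any, Callable, Dict


class StubAgent:
    """Hypothetical stand-in for a real agent; it never calls a model."""

    def __call__(self, prompt: str) -> str:
        return f"(stub reply to: {prompt})"


def make_handler(agent: Callable[[str], str]) -> Callable[[Dict[str, Any]], Dict[str, str]]:
    """Build an invoke(payload) function shaped like the entry point in agent.py."""

    def invoke(payload: Dict[str, Any]) -> Dict[str, str]:
        # Same fallback as the article's snippet when "prompt" is absent.
        user_message = payload.get("prompt", "Hello!")
        # Wrap the reply in the same structured format the snippet returns.
        return {"result": agent(user_message)}

    return invoke


invoke = make_handler(StubAgent())

if __name__ == "__main__":
    # Simulate a JSON request body arriving at the handler.
    request_body = json.dumps({"prompt": "What is AgentCore?"})
    print(json.dumps(invoke(json.loads(request_body))))
```

Keeping the handler logic separable from the agent like this is one possible design choice: it lets the request and response shape be checked in ordinary unit tests before the real agent and `BedrockAgentCoreApp` wrapper are plugged in.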

